Introduction

Code compliance failures in AEC projects are rarely dramatic at first. They do not usually announce themselves as obvious errors or violations. Instead, they surface quietly—during late-stage reviews, authority comments, permit delays, or construction revisions that feel avoidable in hindsight.

By the time a compliance issue becomes visible, it is often embedded deep within the design. Correcting it means rework, re-coordination, and lost momentum. In complex projects, especially in regulated markets like the USA and UAE, these late discoveries carry real financial and reputational cost.

This pillar explains why traditional approaches to code compliance and design validation no longer scale, how AI-assisted validation supports engineering judgment, and why early, structured compliance is becoming a competitive advantage rather than a bureaucratic burden.

Short Briefing: Who This Pillar Is For

This content is written for:

- Engineering consultants responsible for code adherence

- BIM managers and design coordinators

- Architecture firms navigating multi-authority approvals

- Project teams working in highly regulated environments

If your projects involve multiple codes, standards, or authority reviews—and if compliance issues tend to appear late—this pillar addresses the root causes.

The Misconception: “Compliance Is a Final Check”

Many teams still treat code compliance as something that happens at the end of design. Drawings are developed, models coordinated, and then a compliance review is performed before submission.

This approach made sense when:

- Designs were simpler

- Codes were fewer

- Authority feedback cycles were forgiving

That reality no longer exists.

Modern AEC projects involve overlapping regulations, authority-specific interpretations, and evolving standards. Treating compliance as a final gate virtually guarantees late-stage findings.

Why Code Compliance Is Harder Than It Looks

Codes Are Not Single Documents

Building codes are ecosystems. Requirements are distributed across:

- Primary codes

- Local amendments

- Authority circulars

- Reference standards

Design teams must navigate not just what the code says, but how it is applied in a specific jurisdiction.

In the UAE, this often means aligning federal standards with emirate-level authority expectations. In the USA, it means interpreting state codes alongside city-specific requirements. These layers create ambiguity that manual checks struggle to resolve consistently.
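One way to make this layering explicit is to resolve requirements in a fixed order, so that local amendments override the primary code. The sketch below assumes requirements can be reduced to named numeric values. The jurisdiction names, keys, and numbers are hypothetical placeholders, not real code values. Authority circulars could be modeled as a further layer placed ahead of the amendments.

```python
from collections import ChainMap

# Hypothetical values for illustration only; NOT real code requirements.
BASE_CODE = {
    "corridor_min_width_mm": 1100,
    "stair_riser_max_mm": 180,
    "sprinklers_required_above_m": 23,
}

# Hypothetical local amendments that override selected base values.
LOCAL_AMENDMENTS = {
    "city_a": {"corridor_min_width_mm": 1200},
    "city_b": {"sprinklers_required_above_m": 15},
}


def effective_requirements(jurisdiction: str) -> ChainMap:
    """Resolve requirements so that local amendments take precedence.

    ChainMap searches its maps in order. A value defined in the amendment
    layer wins, and the base code fills in everything else. The amendment
    dict is copied so writes through the ChainMap cannot alter the shared table.
    """
    amendments = dict(LOCAL_AMENDMENTS.get(jurisdiction, {}))
    return ChainMap(amendments, BASE_CODE)


def explain(jurisdiction: str, key: str) -> str:
    """Report a resolved value together with the layer it came from."""
    reqs = effective_requirements(jurisdiction)
    source = "local amendment" if key in reqs.maps[0] else "base code"
    return f"{key} = {reqs[key]} ({source})"


print(explain("city_a", "corridor_min_width_mm"))
# corridor_min_width_mm = 1200 (local amendment)
print(explain("city_b", "corridor_min_width_mm"))
# corridor_min_width_mm = 1100 (base code)
```

Recording which layer a value came from matters as much as the value itself. It shows reviewers whether a requirement comes from the base code or from a local interpretation.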

Interpretation, Not Awareness, Is the Real Risk

Most compliance failures do not happen because teams are unaware of requirements. They happen because requirements are interpreted differently across disciplines.

For example:

- An architectural assumption affects fire egress

- A structural decision affects accessibility

- An MEP routing choice penetrates a fire-rated assembly or reduces required headroom

When these interactions are not validated early, non-compliance emerges indirectly—often during authority review or construction.

The Limits of Manual Code Checking

Manual code checking relies heavily on individual expertise. Senior engineers and architects review drawings against their understanding of requirements and flag issues based on experience.

This approach has two limitations.

First, it does not scale well. As models become more complex and regulations more layered, no single reviewer can reliably track every interaction.

Second, it is inconsistent. Different reviewers focus on different risks, leading to uneven coverage across projects.

Manual review remains essential—but it needs support.

Design Validation Is More Than Compliance

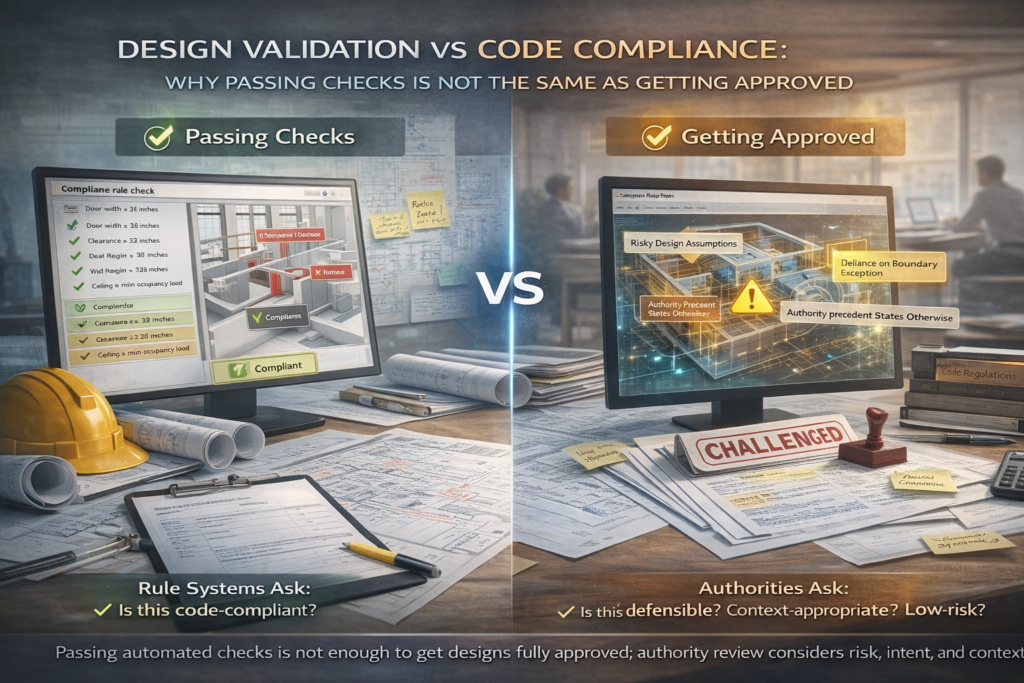

Compliance answers the question: Does this meet the code?

Design validation asks a broader question: Is this defensible, consistent, and aligned with regulatory intent?

Validation includes:

- Checking assumptions against requirements

- Verifying consistency across drawings and models

- Identifying design decisions that increase regulatory risk

Without validation, compliance becomes a checkbox exercise that misses systemic issues.
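Part of the consistency item in the validation list can be mechanized. The sketch below assumes the same parameter can be exported from both the model and a drawing schedule, keyed by element ID. Here that parameter is a hypothetical door clear width. A simple comparison then surfaces mismatches and missing entries. This is a deterministic cross-check, not an AI technique, and the IDs and values are illustrative.

```python
def find_inconsistencies(model: dict, schedule: dict, tolerance_mm: float = 0.0) -> list:
    """Compare one parameter as recorded in the model and in a schedule.

    Both inputs map element IDs to a numeric value (here, a door clear
    width in mm). Returns findings for IDs present in only one source and
    for values that differ by more than `tolerance_mm`.
    """
    findings = []
    # Union of IDs from both sources, sorted so the output order is stable.
    for element_id in sorted(model.keys() | schedule.keys()):
        if element_id not in schedule:
            findings.append(f"{element_id}: in model, missing from schedule")
        elif element_id not in model:
            findings.append(f"{element_id}: in schedule, missing from model")
        elif abs(model[element_id] - schedule[element_id]) > tolerance_mm:
            findings.append(
                f"{element_id}: model {model[element_id]} mm "
                f"vs schedule {schedule[element_id]} mm"
            )
    return findings


# Hypothetical door widths exported from two sources.
model_widths = {"D-101": 900, "D-102": 850, "D-103": 1000}
schedule_widths = {"D-101": 900, "D-102": 900, "D-104": 800}
for finding in find_inconsistencies(model_widths, schedule_widths):
    print(finding)
```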

Where AI Fits — And Where It Does Not

AI is not a replacement for code experts. It does not decide whether a design is acceptable to an authority.

What AI does well is pattern recognition at scale.

AI-assisted validation can:

- Scan models and drawings for known compliance risk patterns

- Flag inconsistencies between design elements and requirements

- Highlight areas where assumptions commonly lead to authority comments

This allows human reviewers to focus their attention where it matters most.
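The sketch below makes "known compliance risk patterns" concrete. It encodes two hand-written patterns as predicates over element parameters and then scans a list of elements. It is a rule-based stand-in that assumes each element arrives as a dictionary with an ID, a category, and parameters. A production system might learn or weight such patterns from past review data instead. The thresholds are placeholders, not values from any code.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RiskPattern:
    """A hand-written risk pattern (a stand-in for learned patterns)."""
    name: str
    category: str                       # element category it applies to
    is_risky: Callable[[dict], bool]    # predicate over element parameters
    note: str                           # why a reviewer should look here


# Placeholder thresholds, NOT values from any code. Missing parameters
# default to values that trigger the flag, so data gaps also surface.
PATTERNS = [
    RiskPattern("narrow_corridor", "corridor",
                lambda p: p.get("clear_width_mm", 0) < 1200,
                "Clear width below the assumed egress margin"),
    RiskPattern("long_dead_end", "corridor",
                lambda p: p.get("dead_end_length_m", float("inf")) > 15,
                "Dead-end length frequently questioned in egress review"),
]


def scan(elements: list, patterns: list) -> list:
    """Return (element_id, pattern_name, note) for every pattern that fires."""
    flags = []
    for element in elements:
        for pattern in patterns:
            if (element["category"] == pattern.category
                    and pattern.is_risky(element["params"])):
                flags.append((element["id"], pattern.name, pattern.note))
    return flags


elements = [
    {"id": "C-01", "category": "corridor",
     "params": {"clear_width_mm": 1500, "dead_end_length_m": 5}},
    {"id": "C-02", "category": "corridor",
     "params": {"clear_width_mm": 1100, "dead_end_length_m": 20}},
    {"id": "D-01", "category": "door", "params": {"clear_width_mm": 800}},
]
for element_id, name, note in scan(elements, PATTERNS):
    print(element_id, name, "-", note)
```

The output is a list of places to look, not a compliance verdict. Whether a flagged corridor is acceptable remains a reviewer's call.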

Platforms such as Ruwaq Design support this approach by applying AI-driven analysis to design data, helping teams identify potential compliance and validation risks earlier—before they turn into rework or delays.


Why Early Validation Changes Project Outcomes

When compliance and validation happen early:

- Design intent is protected

- Coordination becomes more focused

- Authority reviews are smoother

Late validation forces compromise. Early validation preserves options.

This is especially critical in projects where approval timelines are fixed and revisions are costly.

HOW AI SUPPORTS CONTINUOUS COMPLIANCE ACROSS REAL DESIGN WORKFLOWS

Why “One-Time Checks” Don’t Work Anymore

In most AEC projects, design does not move in clean stages. It evolves. Assumptions are tested, revised, and sometimes reversed. Coordination introduces new constraints. Authority feedback reshapes decisions that once seemed settled.

Yet code compliance is often treated as a one-off exercise. Teams perform a review, address comments, and move on—until the next submission reveals new issues. This cycle is inefficient not because teams are careless, but because compliance is being applied to a moving target.

Continuous design change demands continuous validation.

Compliance as a Living Process, Not a Gate

When compliance is positioned as a final gate, it creates tension. Designers feel constrained late. Reviewers feel rushed. Authority comments feel punitive.

Reframing compliance as a living process changes the dynamic. Instead of asking, “Are we compliant yet?” teams ask, “Which decisions increase compliance risk right now?”

AI-assisted validation supports this shift by monitoring design evolution and highlighting where risk is accumulating—not just where a rule is violated outright.

How AI Enables Continuous Validation Without Micromanagement

Many teams fear that continuous compliance means constant interruption. In practice, AI-assisted checks can run in the background and surface results only when a change matches a known risk signal.

AI systems can:

- Monitor model changes across revisions

- Compare updated elements against known risk patterns

- Flag areas where assumptions commonly fail authority review

Importantly, this does not require every change to be reviewed manually. It allows teams to focus review effort selectively, based on risk signals rather than volume of change.
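Selective review based on risk signals can be sketched in two steps. First, find what changed between two model snapshots. Second, route only the changed elements that match a risk signal to a reviewer. The snapshot format (element ID mapped to a parameter dictionary) and the `is_narrow` predicate are assumptions for illustration, and the threshold is a placeholder.

```python
def diff_snapshots(previous: dict, current: dict) -> tuple:
    """Return (changed_or_added, removed) element IDs between two snapshots.

    A snapshot maps element ID -> parameter dict for one model revision.
    """
    changed_or_added = {
        eid for eid, params in current.items() if previous.get(eid) != params
    }
    removed = set(previous) - set(current)
    return changed_or_added, removed


def review_queue(previous: dict, current: dict, is_risky) -> dict:
    """Route risky changes (plus all removals) to a human reviewer.

    Removed elements cannot be evaluated against `is_risky`, so this
    sketch always queues them rather than silently dropping them.
    Changed elements with no risk signal are listed as skipped.
    """
    changed, removed = diff_snapshots(previous, current)
    return {
        "review": sorted(eid for eid in changed if is_risky(current[eid])),
        "removed": sorted(removed),
        "skipped": sorted(eid for eid in changed if not is_risky(current[eid])),
    }


def is_narrow(params: dict) -> bool:
    """Hypothetical risk signal with a placeholder threshold."""
    return params.get("clear_width_mm", 0) < 1200


rev_1 = {"C-01": {"clear_width_mm": 1500}, "C-02": {"clear_width_mm": 1300},
         "C-03": {"clear_width_mm": 1400}, "F-01": {"fire_rating_min": 60}}
rev_2 = {"C-01": {"clear_width_mm": 1500}, "C-02": {"clear_width_mm": 1150},
         "C-03": {"clear_width_mm": 1600}, "C-04": {"clear_width_mm": 1000}}
print(review_queue(rev_1, rev_2, is_narrow))
# {'review': ['C-02', 'C-04'], 'removed': ['F-01'], 'skipped': ['C-03']}
```

Unchanged elements such as `C-01` never reach the queue. This is the "risk signals rather than volume of change" idea in miniature.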

The Value of Pattern Recognition in Compliance Work

Experienced reviewers develop intuition over time. They know which design decisions tend to cause problems later. AI supports this intuition by identifying patterns across many projects and iterations.

For example, certain spatial configurations, clearance assumptions, or system layouts repeatedly trigger authority comments. Individually, these issues may not violate code directly—but collectively, they signal elevated risk.

AI excels at surfacing these patterns consistently, especially across large BIM models where manual scanning is impractical.

Common Validation Failure Patterns AI Helps Surface

Many compliance issues are not isolated errors. They are symptoms of broader design patterns.

A change in one discipline can quietly affect another. A coordination compromise can create a chain reaction of compliance implications. Without structured visibility, these connections are easy to miss.

AI-assisted validation helps surface:

- Repeated assumptions that drift from code intent

- Conflicts between design packages affecting compliance

- Areas where coordination solutions introduce new regulatory risk

This allows teams to address root causes, not just individual comments.

Integrating AI Validation Into Existing BIM Workflows

One of the reasons AI adoption fails is poor integration. Tools that require teams to abandon familiar workflows are resisted, regardless of capability.

Effective AI validation works alongside existing tools, not in place of them. It complements BIM coordination, design review, and QA processes already in use.

Instead of generating parallel reports, AI highlights risk within the context of current models and reviews—supporting decisions rather than dictating them.

Platforms such as Ruwaq Design are designed around this principle, providing AI-assisted validation that integrates with real AEC workflows and supports professional judgment rather than replacing it.

Managing Authority-Specific Interpretation at Scale

One of the hardest aspects of compliance is that authorities interpret codes differently. Two jurisdictions applying the same base standard may focus on different risks or require different documentation.

AI-assisted validation helps by:

- Tracking authority-specific feedback patterns

- Highlighting design features that commonly trigger comments

- Supporting teams in adapting designs proactively

This is especially valuable for firms working across multiple cities or regions, where institutional memory alone is not enough.
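Tracking authority-specific feedback patterns can start with something as simple as counting tagged review comments per authority. The sketch assumes that past comments have already been tagged by topic. The authority names, topics, and the 30% share threshold are hypothetical.

```python
from collections import Counter


def frequent_topics(comments: list, authority: str, min_share: float = 0.3) -> list:
    """Topics that make up at least `min_share` of one authority's comments.

    `comments` is a list of (authority, topic) pairs, for example tags
    applied to past review comments.
    """
    topics = Counter(topic for auth, topic in comments if auth == authority)
    total = sum(topics.values())
    if total == 0:
        return []  # no history for this authority yet
    return sorted(t for t, n in topics.items() if n / total >= min_share)


# Hypothetical tagged comment history.
history = [
    ("authority_a", "egress"), ("authority_a", "egress"),
    ("authority_a", "fire_separation"), ("authority_a", "signage"),
    ("authority_b", "accessibility"), ("authority_b", "accessibility"),
    ("authority_b", "egress"),
]
print(frequent_topics(history, "authority_a"))  # ['egress']
print(frequent_topics(history, "authority_b"))  # ['accessibility', 'egress']
```

Even this crude count captures the point made above. The same base code can produce different review emphases, and that difference can be recorded rather than held only in individual memory.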

From Reactive Fixes to Proactive Design Decisions

When compliance issues are discovered late, teams are forced into reactive fixes. These fixes often compromise design intent and coordination quality.

Continuous validation enables proactive decisions. Teams can evaluate alternatives early, understanding not just whether something is allowed, but how defensible it will be during review.

This improves outcomes even when authority expectations evolve.

The Human Role Becomes More Important, Not Less

A common misconception is that AI reduces the need for expertise. In compliance work, the opposite is true.

By filtering noise and surfacing meaningful risk, AI allows senior reviewers to focus on judgment, interpretation, and dialogue with authorities. Junior staff learn faster by seeing which issues matter most and why.

The result is not automation—it is amplified expertise.

Why Continuous Validation Improves Project Confidence

Projects that adopt continuous validation tend to encounter fewer late surprises. Teams gain confidence that design decisions are aligned not just with internal standards, but with external expectations.

This confidence carries through:

- Coordination meetings

- Client reviews

- Authority submissions

When teams trust their validation process, discussions shift from defensive explanations to constructive collaboration.

GOVERNANCE, DEFENSIBILITY & TURNING COMPLIANCE INTO A STRATEGIC ADVANTAGE

Why Compliance Problems Are Rarely Just Technical

When a project faces compliance issues, the conversation often focuses on drawings, dimensions, or missed clauses. But beneath these surface-level problems is usually a deeper issue: lack of governance.

Compliance failures persist not because teams do not know the codes, but because decisions are made without consistent structure, documentation, or accountability. Assumptions are agreed informally. Interpretations differ between disciplines. And when questions arise later, no one can clearly explain why a particular path was chosen.

In regulated environments, this ambiguity is more damaging than a simple mistake.

Governance Is What Turns Compliance Into a System

Governance in design validation is not about adding bureaucracy. It is about ensuring that decisions are:

- Made intentionally

- Reviewed consistently

- Defensible when challenged

In many AEC projects, compliance decisions live in emails, meeting notes, or individual judgment. This works until authority reviewers ask for justification, or until a design decision is revisited months later under different conditions.

AI-assisted validation supports governance by making compliance logic visible and traceable, rather than implicit and fragile.

Defensibility Matters More Than Perfection

Authorities do not expect designs to be perfect. They expect them to be defensible.

A defensible design shows:

- Awareness of applicable requirements

- Reasoned interpretation where ambiguity exists

- Consistency between drawings, models, and documentation

When teams can explain why a decision was made—and show how it aligns with code intent—authority discussions become collaborative rather than adversarial.

AI does not provide this explanation on its own. It helps teams preserve the reasoning behind decisions so that it is not lost over time.

Audit Trails Are No Longer Optional

In large or high-profile projects, compliance decisions are often revisited long after they are made. This may happen during:

- Authority audits

- Client reviews

- Disputes or claims

- Post-award design changes

Without a clear audit trail, teams are forced to reconstruct decisions from memory. This is risky and inefficient.

AI-supported validation systems help maintain continuity by linking:

- Design elements to requirements

- Assumptions to specific clauses

- Reviews to specific model states

This does not slow teams down. It protects them when scrutiny increases.
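The three links listed above can be captured in a small record structure. In this sketch, a model state is identified by a hash of a canonical JSON export of the snapshot. Each review record ties an element to a requirement reference, an assumption, and that model state. The clause reference format (`CODE-X 4.2`) and all names are hypothetical. A real system would also need access control and tamper-evident storage, which this sketch does not provide.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def model_state_id(snapshot: dict) -> str:
    """Short, stable fingerprint of a model snapshot.

    sort_keys makes the JSON canonical, so identical content always hashes
    to the same ID regardless of dictionary ordering.
    """
    canonical = json.dumps(snapshot, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class AuditRecord:
    element_id: str        # design element under review
    requirement_ref: str   # hypothetical clause reference
    assumption: str        # the interpretation the team relied on
    model_state: str       # fingerprint of the model state reviewed
    reviewer: str
    recorded_at: str       # UTC timestamp, ISO 8601


@dataclass
class AuditLog:
    records: list[AuditRecord] = field(default_factory=list)

    def record(self, snapshot: dict, element_id: str, requirement_ref: str,
               assumption: str, reviewer: str) -> AuditRecord:
        """Append a record linking element, clause, assumption, and model state."""
        entry = AuditRecord(
            element_id=element_id,
            requirement_ref=requirement_ref,
            assumption=assumption,
            model_state=model_state_id(snapshot),
            reviewer=reviewer,
            recorded_at=datetime.now(timezone.utc).isoformat(),
        )
        self.records.append(entry)
        return entry

    def history(self, element_id: str) -> list[AuditRecord]:
        """All records for one element, in the order they were logged."""
        return [r for r in self.records if r.element_id == element_id]


log = AuditLog()
log.record({"C-02": {"clear_width_mm": 1150}}, "C-02", "CODE-X 4.2",
           "Reduced width accepted for low occupant load", "reviewer_a")
log.record({"C-02": {"clear_width_mm": 1250}}, "C-02", "CODE-X 4.2",
           "Widened after authority comment", "reviewer_b")
for r in log.history("C-02"):
    print(r.model_state, r.reviewer, "-", r.assumption)
```

When a decision is questioned months later, the history shows what was assumed, against which clause, and on which model state. Nobody has to reconstruct it from memory.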

Scaling Compliance Across Teams and Regions

Firms operating across multiple jurisdictions face an additional challenge. Compliance expectations vary, but internal standards must remain consistent.

Without structure:

- Teams adapt informally

- Knowledge remains siloed

- Lessons are relearned repeatedly

AI-assisted validation helps firms scale compliance by providing a shared framework that adapts to local context while maintaining organizational discipline.

Over time, this creates institutional knowledge that outlasts individual projects and personnel changes.

Reducing Dependency on Individual Experts

Senior compliance experts are invaluable, but over-reliance on individuals creates vulnerability. When expertise is concentrated in a few people, bottlenecks form and risk increases.

AI does not replace experts, but it helps distribute their insight by:

- Capturing patterns they recognize intuitively

- Highlighting common risk areas consistently

- Supporting junior reviewers with context

This makes teams more resilient without diluting professional responsibility.

Why Early Validation Improves Client Confidence

Clients may not understand the details of code compliance, but they recognize confidence. Projects where teams can explain decisions clearly, anticipate authority concerns, and respond decisively inspire trust.

Early, structured validation helps teams:

- Communicate risks transparently

- Justify design choices convincingly

- Avoid last-minute redesigns

This shifts client conversations from problem-solving to progress tracking.

Compliance as a Competitive Advantage

Firms that treat compliance as a strategic discipline—not a reactive task—stand out in competitive markets. They deliver smoother approvals, fewer redesigns, and more predictable outcomes.

Over time, this reputation compounds. Clients and partners learn which firms manage regulatory risk well and which struggle quietly until problems surface.

AI-assisted code compliance and design validation support this maturity by embedding discipline into everyday workflows, rather than relying on heroic effort late in the process.

The Role of codesnapai.net in the Authority Ecosystem

The purpose of codesnapai.net is not to sell software directly. Its role is to establish credibility and depth around code compliance and design validation in AEC projects.

By publishing experience-led analysis on:

- Why compliance fails

- How validation should evolve

- Where governance matters

the domain earns trust from search engines and professionals alike.

That trust is then passed naturally to Ruwaq Design, positioning it as the platform that enables disciplined, AI-assisted validation without overpromising automation.

Final Conclusion

Code compliance does not fail because teams lack knowledge. It fails because decisions are made without structure, context, or continuity.

AI-assisted design validation helps AEC teams move from reactive checking to governed, defensible compliance—without replacing human judgment. By supporting pattern recognition, traceability, and continuous review, AI allows expertise to scale responsibly.

In an environment where regulatory scrutiny is increasing and design complexity is accelerating, this shift is no longer optional. It is becoming part of what defines professional maturity in AEC delivery.